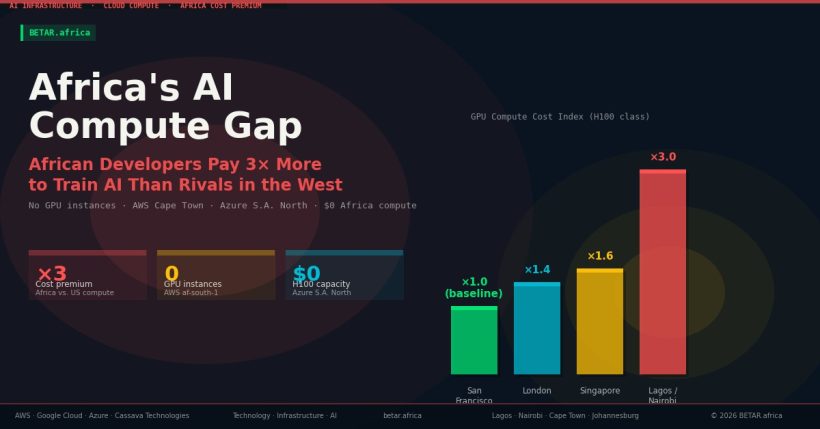

African developers who want to train or fine-tune AI models cannot do it on a hyperscaler cloud in Africa. There are no GPU instances in AWS’s Cape Town region. Google Cloud’s Johannesburg region, launched in 2025, has no confirmed accelerated computing capacity at scale. Azure’s South Africa North region has comparable gaps. The practical consequence: an AI startup in Lagos or Nairobi pays a composite premium (routing, currency risk, egress costs, and on-demand mark-ups) that can reach three times what a comparable San Francisco team pays for equivalent compute.

The infrastructure gap is not a theoretical future problem. It is the present operating reality for every African team attempting to build AI systems at the model layer. And it is generating a quiet contradiction at the heart of Africa’s AI sovereignty narrative: a continent staking its technological future on AI cannot currently train that AI on its own soil, at any competitive price.

The Infrastructure Audit: What Exists, and What Does Not

AWS opened its Africa (Cape Town) region — af-south-1 — in April 2020. It was a significant moment for cloud infrastructure on the continent, providing South African enterprises with local compute for the first time. Six years on, af-south-1 offers general purpose, compute-optimised, memory-optimised, and storage-optimised instance types. It does not offer a single accelerated computing instance. There are no P5, G6, G5, or Inf2 instances available in Cape Town. Developers who need GPU compute must route their workloads to AWS regions in Europe, the United States, or Asia Pacific.

Google Cloud’s situation is marginally better, but it looks worse by comparison as GPU capacity expands in other regions. Google launched its first African cloud region, africa-south1 in Johannesburg, in 2025. The Johannesburg region provides general compute and managed database services, but GPU availability for AI training workloads has not been confirmed at scale. The H100-class instances that now anchor serious LLM training (Google’s A3 High machines, each configured with 8× NVIDIA H100s) are available in us-central1, europe-west4, and Asia Pacific regions. Africa is not on that map.

Microsoft Azure has operated South Africa North (Johannesburg) and South Africa West (Cape Town) regions since 2019. Azure’s ND H100 v5 instances, its flagship GPU offering for AI training, are available in East US, West Europe, and a handful of Asia Pacific regions. Sub-Saharan Africa is excluded.

The one substantive exception to this pattern is Cassava Technologies — the parent company of Liquid Intelligent Technologies. In 2025, Cassava established what it describes as Africa’s first network GPU-as-a-Service offering, operating as NVIDIA’s first preferred cloud partner on the continent. Its GPU infrastructure spans data centres in South Africa, Nigeria, Kenya, Egypt, and Morocco. For African developers, Cassava represents the only meaningful option to access GPU compute within their own region — though at capacity and price points that still cannot absorb continent-scale AI training demand.

The Cost Gap: A Worked Example

Consider two AI startups, each fine-tuning a 7-billion-parameter open-source language model on a custom dataset of 200,000 examples. One team is based in San Francisco. The other is in Lagos.

The San Francisco team provisions an AWS p3.16xlarge instance (8× NVIDIA V100 GPUs) in us-east-1 at $24.48 per hour on-demand, or opts for a spot instance at roughly $7–10 per hour when available. Training runs for approximately 200 instance-hours, which is about 1,600 GPU-hours across the eight V100s. Their all-in compute cost on demand: 200 × $24.48, or approximately $4,900. On spot: roughly $1,400 to $2,000. Data transfer costs within the US region are negligible: AWS charges $0.00 per GB for transfers between S3 and EC2 in the same region.
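The arithmetic behind those figures can be reproduced in a few lines. This is a minimal sketch, not a pricing tool: it assumes the 200 hours are wall-clock hours on a single eight-GPU instance (the reading under which the roughly $4,900 figure works out), and the constant and function names are illustrative.

```python
# Figures quoted in the worked example above.
ON_DEMAND_USD_PER_HOUR = 24.48    # p3.16xlarge on-demand rate, us-east-1
SPOT_USD_PER_HOUR = (7.0, 10.0)   # rough spot range quoted in the text
GPUS_PER_INSTANCE = 8             # 8x NVIDIA V100
INSTANCE_HOURS = 200              # assumption: wall-clock hours on one instance


def compute_cost(rate_usd_per_hour: float, instance_hours: float) -> float:
    """Compute bill for a run: hourly instance rate times instance-hours."""
    return rate_usd_per_hour * instance_hours


on_demand = compute_cost(ON_DEMAND_USD_PER_HOUR, INSTANCE_HOURS)
spot_low, spot_high = (compute_cost(r, INSTANCE_HOURS) for r in SPOT_USD_PER_HOUR)
gpu_hours = INSTANCE_HOURS * GPUS_PER_INSTANCE

print(f"GPU-hours consumed: {gpu_hours}")                # 1600
print(f"On-demand: ${on_demand:,.0f}")                   # $4,896
print(f"Spot: ${spot_low:,.0f} to ${spot_high:,.0f}")    # $1,400 to $2,000
```

The gap between the on-demand and spot totals matters later: a team that cannot reliably get spot capacity pays the higher figure before any other premium is applied.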

The Lagos team faces a different calculation. They cannot use af-south-1: no GPU instances exist there. They must provision in eu-west-1 (Ireland) or us-east-1. Before training begins, their 50GB training dataset must move from Lagos to an S3 bucket in Europe or the US. AWS does not bill inbound uploads, but any data already stored in af-south-1 is charged at the inter-region transfer rates that apply to data leaving the Cape Town region, and the upload itself depends on local bandwidth. During training, every round trip between Lagos and the remote region (data staging, monitoring, checkpoint pulls) adds latency that compounds across 200 hours of work and can reduce effective GPU utilisation. Model outputs, tens of gigabytes of checkpoints, must then be retrieved from the remote region, incurring egress charges.

Beyond direct compute, the Lagos team absorbs costs that do not appear on AWS invoices: currency conversion from naira to US dollars at commercial bank rates that typically run 3–5 per cent above interbank rates, and exposure to naira depreciation across a multi-week training run. For a startup that raised funding in naira or earns local-currency revenue, USD-denominated cloud bills have grown materially more expensive as the naira has weakened.

Aggregated across compute premiums, egress costs, latency-induced inefficiency, and currency exposure, African AI teams consistently report a composite cost multiplier of two to three times their US-based peers for equivalent workloads. The World Economic Forum validated that figure in its December 2025 green compute analysis, which estimated that competitive local compute could unlock $1.5 trillion in African economic value.
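One way to see how these components stack is to model them as multipliers on a baseline compute bill. The sketch below is illustrative only. The $24.48 hourly rate, the 200 hours, and the 3–5 per cent FX spread come from the text; the utilisation loss, transfer volume, per-GB transfer rate, and depreciation figure are placeholder assumptions, not measured values, and `RunCost` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class RunCost:
    hourly_rate_usd: float             # instance price per hour
    hours: float                       # instance-hours needed at full utilisation
    utilisation: float = 1.0           # effective GPU utilisation (1.0 = no slowdown)
    transfer_gb: float = 0.0           # billed data transfer volume, in GB
    transfer_usd_per_gb: float = 0.0   # billed rate per GB
    fx_spread: float = 0.0             # bank margin over the interbank rate
    depreciation: float = 0.0          # local-currency slide during the run

    def total_usd_equivalent(self) -> float:
        """Bill in USD terms, scaled up by FX spread and currency depreciation."""
        compute = self.hourly_rate_usd * self.hours / self.utilisation
        transfer = self.transfer_gb * self.transfer_usd_per_gb
        return (compute + transfer) * (1 + self.fx_spread) * (1 + self.depreciation)


# Baseline: San Francisco team, on-demand, no extra frictions.
sf = RunCost(hourly_rate_usd=24.48, hours=200)

# Lagos team: same instance, with ASSUMED frictions (placeholders, not data).
lagos = RunCost(
    hourly_rate_usd=24.48,
    hours=200,
    utilisation=0.90,           # assumption: 10% of paid hours lost to latency
    transfer_gb=100,            # assumption: dataset plus checkpoints
    transfer_usd_per_gb=0.10,   # assumption: placeholder per-GB rate
    fx_spread=0.04,             # midpoint of the 3-5% range in the text
    depreciation=0.05,          # assumption: 5% slide over the run
)

multiplier = lagos.total_usd_equivalent() / sf.total_usd_equivalent()
print(f"Composite multiplier vs on-demand baseline: {multiplier:.2f}x")
```

With these placeholder inputs the frictions alone do not reach the reported two-to-three-times range. The ratio grows substantially when the baseline is a team that reliably secures spot pricing, which is consistent with the article listing on-demand mark-ups among the components of the premium.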

Who Is Trying to Change This — and What They Have Actually Delivered

The announcements have not been small. In February 2026, the African Development Bank and UNDP launched the AI 10 Billion Initiative at the Nairobi AI Forum — a commitment to mobilise up to $10 billion by 2035, with compute expansion as one of five core enablers alongside data, skills, trust, and capital. That same month, the African Union Commission signed a landmark MoU with Google at AU Headquarters in Addis Ababa, listing AI-ready cloud infrastructure expansion as a priority area. Microsoft, for its part, has committed $1 billion alongside G42 to a geothermal-powered data centre campus in Kenya that will expand to 100MW of capacity, with an East Africa cloud region announced alongside it.

These commitments are real. They are also, at this stage, largely prospective.

The AfDB initiative’s ignition phase runs through 2027. The Kenya Microsoft-G42 data centre is expected to reach initial capacity in approximately two years. The AU-Google MoU outlines priority areas; it does not specify GPU cluster deployments or timelines for accelerated computing availability in African cloud regions. None of these announcements has yet produced a single H100 instance available to an African developer inside an AWS or GCP African region.

The delta between announced and delivered is not a reason for cynicism. Infrastructure takes time to build, and capital commitments of this scale represent genuine political and commercial intent. But it is a reason for precision when describing Africa’s AI compute landscape to founders, researchers, and policymakers making decisions now. The pipeline is real. The present reality is a structural gap.

The Sovereignty Paradox

In February 2026, Google launched WAXAL — an open-source speech dataset covering 21 African languages, owned by African academic and community partners, freely available on Hugging Face. WAXAL was widely celebrated as a breakthrough for African AI sovereignty: data collected by Africans, owned by Africans, released to the world. The dataset’s custodians include Makerere University in Uganda, the University of Ghana, and Digital Umuganda in Rwanda — institutions that had full control over collection practices and licensing.

But to train a speech model on WAXAL at scale, an African developer must rent compute from a US hyperscaler in a US or European data centre. The data is sovereign. The compute is not.

This is the structural tension at the centre of Africa’s AI sovereignty discourse. Sovereignty at the data layer is achievable and being actively pursued — through WAXAL, through continental data governance frameworks, through the AU’s Digital Transformation Strategy. Sovereignty at the compute layer — the ability to train, fine-tune, and run AI models without routing workloads and costs through foreign jurisdictions — remains aspirational. The political framing around African AI sovereignty often conflates the two, obscuring the deeper dependency that the compute gap represents.

For Africa’s AI researchers and startups, the implication is practical rather than philosophical: every foundation model fine-tuned on African data, for African use cases, in African languages, is currently built on compute that is priced, governed, and physically located outside Africa. Until that changes, African AI development is structurally dependent on hyperscaler pricing decisions, GPU allocation policies, and data centre investment timelines that African stakeholders do not control.

The infrastructure announcements of early 2026 suggest that the gap is being taken seriously, at scale, for the first time. Whether the delivery timelines match the urgency — and whether the $10 billion mobilisation produces actual GPU capacity in African cloud regions, rather than primarily training programmes and governance frameworks — is the question that Africa’s AI builders are watching most closely.

GPU Availability and Pricing: Africa vs. Global Hyperscaler Regions

| Provider | African Region | GPU Instances in Africa | Nearest GPU Region | H100 On-Demand (USD per GPU-hr) | GPU Available in Africa? |
|---|---|---|---|---|---|
| AWS | af-south-1 (Cape Town) | None | eu-west-1 (Ireland) | ~$12.28 (p5.48xlarge, 8×H100) | No |
| Google Cloud | africa-south1 (Johannesburg) | Not confirmed at scale | europe-west4 (Netherlands) | ~$11.06 (A3 High, 8×H100) | No |
| Azure | South Africa North (Johannesburg) | None (no ND H100 v5) | East US / West Europe | ~$29.72 (ND H100 v5, per GPU) | No |
| Cassava / Liquid | SA, Nigeria, Kenya, Egypt, Morocco | Yes (GPUaaS, NVIDIA-powered) | On-continent | Not publicly listed | Yes (limited capacity) |

Sources: AWS EC2 instance types by region; Google Cloud GPU regions documentation; Azure ND H100 v5 pricing pages; Cassava Technologies GPU-as-a-Service announcement. On-demand pricing as of Q1 2026.