Africa’s AI Regulation by Default: How Data Protection Law Is Doing the Work AI Law Doesn’t

Across most of the continent, governments have no AI-specific legislation. Data protection frameworks are filling the gap — but the fit is imperfect, the enforcement is uneven, and the gaps are growing as AI adoption accelerates.

When a Nigerian fintech’s AI credit-scoring model wrongly denies a loan application, which law applies? When a Ghanaian hospital uses an AI diagnostic tool that misclassifies a patient’s condition, which regulatory body investigates? When a South African insurer’s AI underwriting system applies a proxy variable that disproportionately disadvantages Black applicants, what is the enforcement pathway?

In most African jurisdictions, the answer is the same: the applicable law is a data protection statute written before large language models existed, before algorithmic credit decisions became routine, and before AI-generated content entered the misinformation economy. These laws were designed to govern how personal data is collected, stored, and used — not how automated systems make consequential decisions at scale. They are doing AI regulation by default, because no alternative exists.

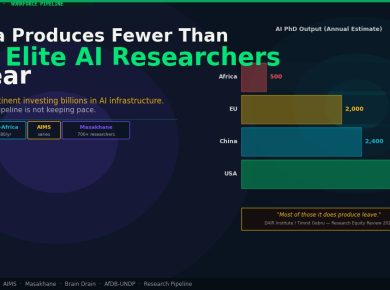

That is the continent’s current reality. Five African countries are moving toward dedicated AI governance frameworks. The other 49 are not.

The Map: Who Has What

The dividing line in African AI governance is not between countries that understand AI risk and those that don’t. It is between countries that have acted legislatively and those that haven’t had the institutional bandwidth to do so.

Nigeria’s National Digital Economy and E-Governance Bill, the continent’s most comprehensive piece of AI legislation to date, is awaiting presidential assent after clearing both chambers of the National Assembly in late 2025. It establishes a risk-tier classification system, mandatory pre-deployment registration for high-risk AI, and a National AI Council housed within NITDA. Nigeria is building an AI regulatory architecture from the ground up.

Kenya tabled its Artificial Intelligence Bill 2026 in the Senate in February 2026, proposing a standalone AI Commissioner with criminal enforcement powers, including prison terms for deploying high-risk AI without approval. South Africa’s National AI Policy Framework has cleared Cabinet and is moving toward a 60-day public consultation, embedding AI governance within existing sector regulators (the FSCA, the Information Regulator established under POPIA, and ICASA) rather than creating a new body. Morocco operationalised its Digital X.0 framework in late 2025, moving AI governance through parliamentary channels. Rwanda has published an AI governance framework alongside its CBDC policy, signalling a dual-track regulatory push.

That is five countries out of 54. The other 49 — including Ghana, Senegal, Tanzania, Uganda, Ethiopia, Mozambique, Zambia, and Cameroon — are operating without any AI-specific regulatory instrument. In these markets, data protection law is the closest available framework. In several cases, it is doing more regulatory work than its drafters ever intended.

How DPAs Became AI Regulation

Data protection frameworks contain several provisions that, stretched and interpreted, function as AI constraints. The mechanism is not perfect, but it is not nothing.

Automated decision rights. South Africa’s POPIA Section 71 protects data subjects from decisions with legal or similarly substantial consequences that are based solely on automated processing of their personal information. Where such decisions are permitted, the data subject must be given an opportunity to make representations and sufficient information about the underlying logic. This is, in practice, an AI explainability and human oversight requirement. It doesn’t require pre-deployment registration or risk-tier classification, but it does impose a structural constraint on fully automated systems that affect individuals. South Africa’s Information Regulator has begun signalling that Section 71 is operative and enforceable, not merely aspirational.

Purpose limitation. The Nigeria Data Protection Regulation (NDPR) and its successor, the Nigeria Data Protection Act (NDPA), require that personal data be collected for specified, explicit, and legitimate purposes, and not further processed in ways incompatible with those purposes. Collecting customer data for one purpose (loan applications, say) and then repurposing it to train a fraud-detection model or creditworthiness classifier without fresh consent is a potential violation of both instruments. The Nigeria Data Protection Commission’s enforcement posture, which has accelerated since 2024, makes this a live compliance risk rather than a theoretical one.
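For engineering teams, the purpose-limitation point can be turned into a simple gate in front of any training pipeline. The sketch below is illustrative only: the `CustomerRecord` type, the purpose labels, and the exact-match rule are all assumptions made for the example, and an exact match on a purpose label is deliberately stricter than the legal question of whether a new purpose is compatible with the original one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomerRecord:
    """Hypothetical record tagged with the purposes the customer consented to."""
    customer_id: str
    consented_purposes: frozenset


def split_by_purpose(records, training_purpose):
    """Separate records usable for `training_purpose` from those needing fresh consent."""
    usable, needs_consent = [], []
    for record in records:
        # Conservative rule (an assumption): only an exact purpose match counts.
        if training_purpose in record.consented_purposes:
            usable.append(record)
        else:
            needs_consent.append(record)
    return usable, needs_consent


# Purpose labels here are invented for illustration.
records = [
    CustomerRecord("c-001", frozenset({"loan_application"})),
    CustomerRecord("c-002", frozenset({"loan_application", "fraud_model_training"})),
]
usable, needs_consent = split_by_purpose(records, "fraud_model_training")
```

In this example only the second record would reach the fraud-model training set; the first would be held back until fresh consent, or another lawful basis confirmed by counsel, is recorded.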

Consent architecture. Kenya’s Data Protection Act 2019 requires that consent for data processing be specific, informed, and freely given. Where AI systems are trained on or process personal data, which describes most commercially deployed AI in Africa, the Act’s consent requirements impose upstream discipline on what data can be lawfully used. Ghana’s Data Protection Act 2012, one of Africa’s earliest, operates similarly, requiring that data subjects understand what their data will be used for. Using personal data to train a generative AI model almost certainly exceeds the scope of consent gathered for a service interaction.

These mechanisms are real. They constrain AI deployment in meaningful ways. But they were designed for a pre-AI data economy, and the structural gaps they leave are widening as AI systems grow more capable.

What DPAs Cannot Do

The most significant gap is risk-tier classification. AI systems exist on a spectrum of potential harm — from a music recommendation algorithm to an AI-powered pretrial risk assessment tool. Data protection frameworks treat all personal data processing as roughly equivalent in terms of procedural requirements. They have no mechanism to identify that a credit-scoring AI poses categorically different societal risk than a spam filter, and to apply proportionately different oversight to each.

The EU AI Act’s innovation, and Nigeria’s AI Bill’s adoption of the same logic, is the risk pyramid: prohibited practices at the top, high-risk systems subject to mandatory oversight below them, and limited- and minimal-risk systems with lighter obligations at the base. Without that architecture, regulators in DPA-only jurisdictions have no principled basis for prioritising enforcement attention on the AI systems that matter most.
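To make the tiering logic concrete, here is a minimal sketch of how a risk tier can drive enforcement priority. The tier names, the assignments in `ASSUMED_TIERS`, and the `enforcement_queue` helper are hypothetical and are not taken from the EU AI Act or Nigeria’s bill; the use cases are the ones mentioned in this piece.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    """Illustrative tiers; a higher value means closer oversight."""
    LOWER_RISK = 1
    HIGH_RISK = 2
    PROHIBITED = 3


# Hypothetical tier assignments, for illustration only.
ASSUMED_TIERS = {
    "spam_filter": RiskTier.LOWER_RISK,
    "music_recommendation": RiskTier.LOWER_RISK,
    "credit_scoring": RiskTier.HIGH_RISK,
    "pretrial_risk_assessment": RiskTier.HIGH_RISK,
}


def enforcement_queue(systems):
    """Order systems so the highest assumed tier is reviewed first (ties by name)."""
    return sorted(systems, key=lambda name: (-ASSUMED_TIERS[name], name))


queue = enforcement_queue(["spam_filter", "credit_scoring", "music_recommendation"])
```

A data protection statute on its own supplies no equivalent of `ASSUMED_TIERS`: every system that processes personal data enters the queue with the same priority.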

Liability is a second structural gap. When an AI system causes harm — a misdiagnosis, a biased hiring decision, a financial loss triggered by a hallucinated model output — data protection law can address only the data-processing dimension of that harm. It cannot assign liability for the model’s design choices, its training methodology, or its deployment context. Algorithmic liability is a distinct legal construct that DPAs were not built to contain.

Training data governance is a third gap. Data protection frameworks govern personal data collected from individuals. They say little about synthetic data, scraped public data, or data used to train foundation models where the causal chain between an individual’s data contribution and a model output is indirect. The NDPR and Kenya DPA were not designed for a world where personal data is one input into trillion-parameter models whose outputs affect people who never consented to anything.

The Cross-Border Compliance Pain

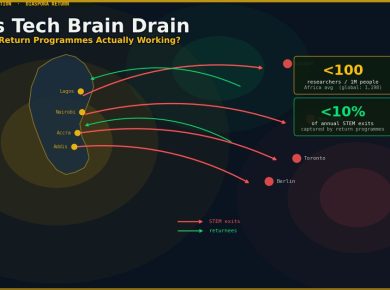

For African fintechs operating across multiple markets — a Nigerian neobank with East African operations, a Kenyan payment infrastructure provider serving West Africa — the DPA-as-AI-regulation patchwork creates compounding compliance complexity.

A Nigerian fintech operating an AI credit-scoring system must simultaneously manage NDPC oversight under the NDPA, prospective NITDA oversight under the AI Bill once it receives presidential assent, FSCA-style expectations in any South African subsidiary, and the Kenya AI Bill’s pre-deployment registration requirement once it passes the National Assembly. Each framework uses different terminology, different risk categories, and different enforcement channels.

The practical result: cross-border AI compliance is currently a manual, market-by-market exercise with no mutual recognition framework anywhere on the continent. Pan-African operators are effectively building compliance infrastructure five times over — once per jurisdiction — with no reuse across markets.

Is a Continental Standard Coming?

The African Union’s Continental Artificial Intelligence Strategy, adopted in 2024, establishes goals for ethical AI, inclusive deployment, and continental competitiveness, but it is a framework document, not binding regulation. The AU has no enforcement mechanism. Continental AI governance standards would require implementation through national legislation in each member state, which returns the problem to exactly the starting point: most states have no national AI law to harmonise with.

The more likely near-term convergence mechanism is regulatory peer pressure. If Nigeria’s AI Bill receives assent and NITDA begins enforcing its registration and audit requirements, neighbouring markets will face a choice: align with Nigeria’s framework or create a regulatory arbitrage environment where AI systems are built in permissive jurisdictions and deployed into regulated ones. The ECOWAS digital trade agenda provides a vehicle for regional alignment, but no AI-specific initiative is currently on its active work programme.

In East Africa, the Kenya AI Bill — if passed — would create a similar pressure dynamic. The EAC’s digital economy framework has not addressed AI regulatory harmonisation; that gap will become more visible once Kenya has a functioning AI Commissioner and its neighbours don’t.

The Research Desk’s forthcoming analysis of four of Africa’s dedicated AI governance frameworks (Nigeria, Kenya, South Africa, and Morocco) will map the architecture of the jurisdictions that have acted. This piece addresses the larger universe: the 49 that haven’t, and the data protection patchwork holding the line in the meantime.

The Practical Position for Operators

For companies building AI products in Africa, the regulatory picture requires a market-by-market read rather than any continental shorthand.

In Nigeria: the AI Bill is almost certain to become law this quarter. Prepare for NITDA registration, impact assessment requirements, and NDPC interaction on data governance. The compliance window is narrowing.

In Kenya: the AI Bill is in Senate committee. Monitor the committee report, expected Q2 2026. Begin mapping AI systems against the bill’s risk classification framework now. The pre-deployment registration requirement is the operational constraint that matters most for fintech and health AI deployers.

In South Africa: POPIA Section 71 is operative today. The AI Policy’s 60-day consultation window is open. The November 2025 joint report from the FSCA and the Prudential Authority (PA) sets the financial sector’s current expectation baseline. Compliance teams should be documenting AI use cases and bias mitigation frameworks ahead of the 2027/2028 sector strategy cycle.

In Ghana, Senegal, Tanzania, Uganda, and most of the continent: data protection law applies where personal data is processed. Consent architecture, purpose limitation, and data minimisation requirements constrain what AI systems can lawfully be trained on. That is a real but incomplete regulatory environment — and it is what exists until parliaments act.
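Operators tracking several markets may find it useful to hold this market-by-market read as structured data rather than prose. The sketch below encodes only the positions described in this piece; the `MarketPosition` type, its field names, and the `upcoming_obligations` helper are hypothetical, and the entries are a snapshot that would need legal review before anyone relied on them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarketPosition:
    """Hypothetical per-market summary, populated from this article only."""
    ai_instrument: str     # status of any AI-specific instrument
    applies_today: tuple   # rules described as already in force
    prepare_for: tuple     # obligations operators are advised to anticipate


MARKETS = {
    "Nigeria": MarketPosition(
        "AI Bill awaiting presidential assent",
        ("NDPA obligations enforced by the NDPC",),
        ("NITDA registration", "impact assessments"),
    ),
    "Kenya": MarketPosition(
        "AI Bill in Senate committee",
        ("Data Protection Act 2019 consent requirements",),
        ("pre-deployment registration",),
    ),
    "South Africa": MarketPosition(
        "National AI Policy Framework cleared by Cabinet",
        ("POPIA Section 71",),
        ("documented AI use cases", "bias mitigation frameworks"),
    ),
    "Ghana": MarketPosition(
        "no AI-specific instrument",
        ("Data Protection Act 2012",),
        (),
    ),
}


def upcoming_obligations(markets):
    """Map each market to what to prepare for; unmapped markets map to None."""
    return {m: (MARKETS[m].prepare_for if m in MARKETS else None) for m in markets}


plan = upcoming_obligations(["Nigeria", "Ghana", "Zambia"])
```

Returning `None` for an unmapped market, rather than an empty tuple, keeps "not yet assessed" distinct from "nothing AI-specific to prepare for".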

The honest assessment: for most of Africa, AI is being regulated by accident. Data protection laws are doing their best. The jurisdictions that build dedicated AI frameworks first will set the standards that spread — by adoption, by regional pressure, or simply by operating as the markets too large to ignore.