Nigeria’s AI Bill is the most detailed AI governance text on the African continent. It classifies AI systems by risk tier, mandates annual audits for high-risk deployments, requires formal licences for operators in critical sectors, and places enforcement authority in NITDA. When signed, it will give Nigeria a statutory framework that most African governments do not yet have. What it does not do is mention elections.

That omission matters more than it might appear. The 2027 presidential election cycle has effectively already begun. Deepfake audio clips attributed to opposition figures are circulating on WhatsApp. AI-generated campaign content is appearing on social media feeds. Coordinated inauthentic behaviour networks — automated accounts amplifying partisan narratives — were documented in Nigerian digital public discourse well before the last election. The regulatory gap between what the AI Bill covers and what AI-enabled electoral interference actually looks like is wide, and closing it will require more than good intentions before 2027.

What the Risk-Tier Framework Does — and Does Not — Cover

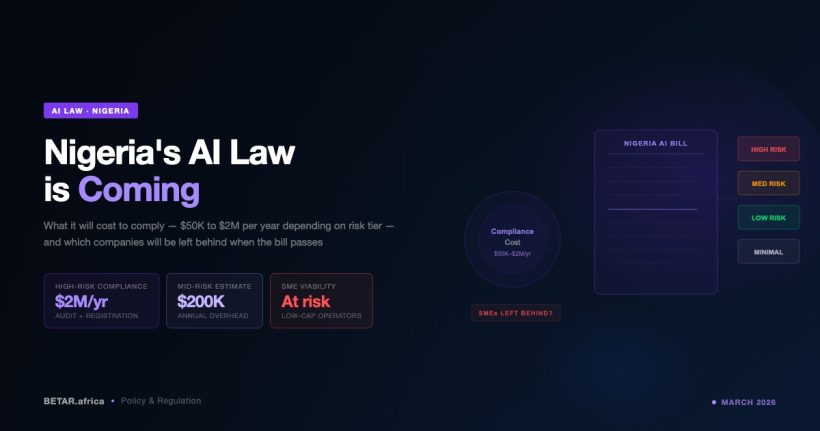

The AI Bill’s risk classification framework is its centrepiece. AI systems deployed in public administration, automated decision-making affecting legal rights, and critical infrastructure are classified as high-risk — the category that triggers mandatory annual audits, formal licensing requirements, and NITDA oversight. High-risk operators must demonstrate compliance before deployment, not after harm has occurred.

Within that framework, some electoral AI applications are covered. INEC operates automated systems for voter registration verification, biometric matching, and results transmission. Each of those, if challenged under the AI Bill’s classification criteria, could plausibly qualify as public administration AI or AI involved in automated decision-making with consequential outcomes. A legal argument exists that INEC’s core technology infrastructure falls under the high-risk tier.

But the AI applications most directly driving electoral interference are not. Deepfakes, meaning synthetic video or audio fabricating statements by candidates or officials, are not classified anywhere in the Bill’s risk framework. The legislation addresses AI systems operated by entities within Nigeria’s regulatory perimeter: companies, state institutions, licensed operators. A deepfake produced outside Nigeria, or by individuals rather than regulated entities, falls outside the Bill’s enforcement scope entirely. The same is true for AI-generated political advertising and coordinated inauthentic behaviour networks, which are primarily platform-moderation problems rather than operator-licensing problems.

INEC’s Readiness Gap

If the AI Bill does classify INEC’s systems as high-risk, the electoral commission faces an audit requirement it has not prepared for. INEC has no published AI governance policy, no dedicated AI risk unit, and no framework for evaluating the systems it already operates against an audit standard. The commission’s 2022 technology deployment, the Bimodal Voter Accreditation System (BVAS) and the IReV results transmission platform, generated significant controversy around reliability and transparency. The underlying question in that controversy, whether voters could trust AI-mediated electoral systems, is precisely the question a proper AI audit would address.

The AI Bill as drafted sets no compliance timeline specific to electoral bodies. The broader implementation schedule gives regulated entities a period to come into conformity after the Bill takes effect. Given that the 2027 presidential election falls within a plausible implementation window, the commission could in theory be publishing its first AI audit around the same time it is managing one of Nigeria’s most contested electoral cycles. That is not a comfortable position.

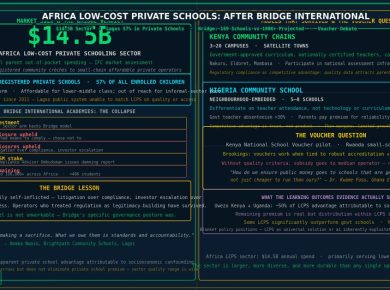

Beyond INEC’s own systems, there is the question of what obligations the Bill places on political actors. Campaign operations that deploy AI for targeting, content generation, or voter suppression tactics are not addressed in the current text. Political advertising AI — micro-targeted messaging built on voter data — does not fit cleanly into any of the Bill’s existing categories. The risk framework was designed primarily with commercial and public-sector deployments in mind, not the grey zone of political communication.

What a Stronger Framework Would Require

Closing the electoral blind spot would require additions to the AI Bill that the current draft does not contain. Three provisions would make the framework meaningfully applicable to election integrity.

First, explicit classification of AI-generated synthetic electoral content (deepfakes of candidates, fabricated audio, AI-generated campaign material) as a category requiring disclosure. Several jurisdictions, including the European Union under its AI Act, require labelling of AI-generated or manipulated content such as deepfakes. Nigeria does not need to copy that framework wholesale, but a disclosure obligation for synthetic electoral content would be directly actionable by INEC and the National Broadcasting Commission.

Second, a platform accountability provision. The most consequential electoral AI harms are distributed through social platforms — Meta, TikTok, X, YouTube. The AI Bill does not currently establish clear obligations for platforms hosting AI-generated content in the Nigerian electoral context. COMESA’s recently launched probe into Meta’s WhatsApp AI integration signals that continental bodies are beginning to assert jurisdiction over platform AI. Nigeria’s AI Bill could go further by requiring platforms to report AI-generated electoral content at scale to INEC or a designated authority.

Third, a specific compliance deadline for INEC that precedes the electoral calendar. If the AI Bill requires INEC to audit its own systems, that audit should be complete — and publicly accessible — before voter registration for 2027 opens, not after.

The Kenya Comparison

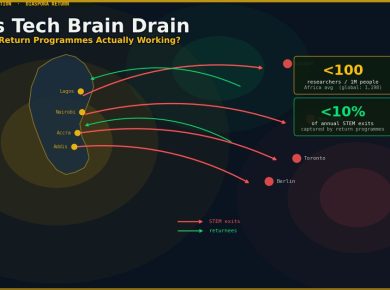

Kenya provides the most instructive continental precedent. The 2022 general election was the first major African election to see documented AI-generated disinformation campaigns at scale — fabricated audio clips attributed to senior political figures, synthetic social media profiles amplifying polarising narratives, and automated messaging targeting specific ethnic communities. The Independent Electoral and Boundaries Commission was not equipped to respond in real time. Post-election reviews identified the gap, but regulatory reform has been slow.

Kenya’s AI Bill, currently in draft, draws directly on those 2022 lessons. It includes specific provisions on AI use in electoral contexts, requires platform disclosure of synthetic political content, and gives the IEBC a formal role in AI governance coordination. Nigeria’s legislature could use that framework as a reference point during the current review period: Nigeria’s own AI Bill has not yet been signed, which means there is still space to insert electoral-specific provisions.

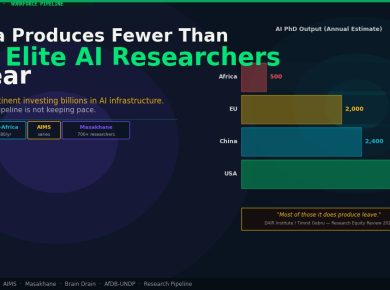

The window is not large. With the 2027 cycle effectively underway and the Bill expected to receive presidential assent by the end of March, the time available for substantive amendment is measured in weeks, not months. Nigeria has built the most sophisticated AI governance text on the continent. The question is whether it can also become the first to make that framework election-ready before the next ballot is cast.

— Technology Reporter, BETAR.africa