African Data Labour Rights: Gig Workers, Military AI, and the Informed Consent Gap

Kenyan and Nigerian workers who labelled training data for AI systems did not know their work would feed U.S. military applications. An investigation into the consent gap at the heart of Africa’s AI data economy.

When Hassan took a data labelling job on Appen’s crowdsourcing platform in Nairobi, he believed he was helping train commercial technology — voice assistants, product recommendation systems, the kind of AI that powers a smartphone app. What he did not know, and was never told, was that his work would also contribute to AI systems used by the United States Department of Defense.

“If they had said it was for the military, I would have thought about it differently,” Hassan told Rest of World in February 2026, in an investigation published jointly with The Bureau of Investigative Journalism. “I didn’t even know that was possible.”

Hassan is one of thousands of African workers, the majority concentrated in Kenya and Nigeria, who have participated in AI training data pipelines that flow, through a series of commercial sub-licences, into some of the most sensitive military AI applications in the world. An investigation by BETAR.africa into the Africa-specific dimensions of this story finds that the informed consent gap is not merely an oversight. It is structural, legally unresolved, and sustained by the economics of global AI development.

The Scale of Africa’s AI Data Workforce

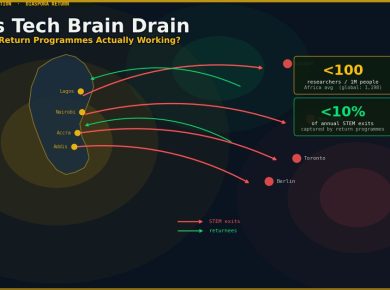

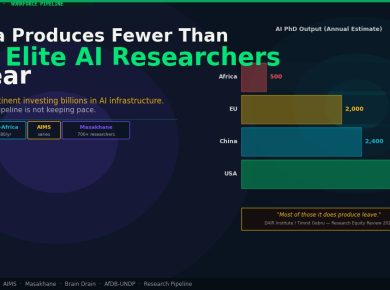

Appen, the Australian-listed AI training data company, maintains contractor networks in more than 180 countries. Kenya and Nigeria are among its largest African markets, offering a combination of English fluency, high unemployment among educated young people, and an established gig economy infrastructure built on mobile money platforms.

The work itself is meticulous and invisible. Workers tag objects in photographs, transcribe audio, assess the relevance of search results, and evaluate AI-generated text for accuracy and coherence. Pay ranges from $2 to $9 per hour for most African workers, well below what comparable work commands in the United States or Western Europe, but enough to attract a steady supply of labour in Nairobi and Lagos.

What workers are rarely told is where that labelled data ultimately goes. Appen sells processed training datasets to clients including technology companies, government agencies and, through intermediaries, defence contractors. The TBIJ/Rest of World investigation documented specific contracts connecting Appen’s data pipeline to U.S. DoD AI programmes. For the African workers whose labour populated those datasets, this was news.

“I thought I was helping build something useful,” said Ismail, a Lagos-based data labeller who worked on Appen projects for eighteen months. “Not weapons. Not surveillance.”

The Legal Framework — and Its Gaps

Both Kenya and Nigeria have data protection legislation that, on paper, should prevent exactly this kind of uninformed downstream use. The gap lies in enforcement capacity and cross-border visibility.

Kenya’s Data Protection Act 2019 defines valid consent in Section 30 as freely given, specific, informed, and unambiguous. Critically, Section 30(b) requires that the specific purpose of processing be disclosed at the point of collection. Appen contractor agreements that disclose “AI training” as the purpose, without specifying that downstream uses may include military and government applications, likely violate this standard for Kenyan workers. Section 33 imposes a higher threshold — separate explicit consent — for sensitive data categories including biometric data and voice samples, which are common in AI training data collection.

Nigeria’s Data Protection Act 2023, enacted in June 2023 and operationalised through the newly established Nigeria Data Protection Commission, contains similar protections. Sections 2.2 and 2.3 require that the processing purpose be clearly specified at the point of data collection, and that fresh consent be obtained for any material change of purpose. The commercial re-licensing of training data from a civilian AI application to a military one is precisely such a change — and no evidence has emerged that Nigerian workers were ever notified of it.

“The consent standards exist,” said Dr. Isaac Rutenberg, Director of the Centre for Intellectual Property and Information Technology Law at Strathmore University in Nairobi. “The problem is that enforcement jurisdiction stops at the border. Once data leaves Kenya as a packaged training dataset, the ODPC has no mechanism to track how it is sub-licensed or to whom.”

The Office of the Data Protection Commissioner (ODPC) of Kenya, the regulatory body created under the 2019 Act, has not issued specific guidance on AI training data sub-licensing practices. The Nigeria Data Protection Commission (NDPC), established under the 2023 Act, likewise has no enforcement precedent on cross-border re-licensing of AI training data to foreign government or military clients.

The accountability gap is, in effect, built into the architecture of global AI supply chains. African workers are at the base of those chains. African regulators have no visibility into what happens at the top.

The Worker Perspective

Joan Kinyua, a coordinator with the Data Labellers Association of Kenya, describes the informed consent problem as both a legal issue and a labour rights issue. “When you are a piece worker, you have no bargaining power,” she said in February 2026. “You accept the terms or you don’t work. Nobody explains what the terms mean.”

A 2025 survey by Equidem, a labour rights research organisation, documented that a significant proportion of African AI training data workers report psychological harm from exposure to disturbing content — violence, extremist material, graphic imagery — that they were not warned they would encounter. The absence of informed consent about content type is, in miniature, the same structural problem as the absence of informed consent about downstream military uses: workers are kept ignorant as a feature, not a bug, of the business model.

Ladi Anzaki Olubunmi, a Nigerian worker who documented her experience with AI data labelling in March 2025, described receiving tasks involving content she found deeply disturbing, with no prior warning and no accessible mechanism to raise concerns. Her account, which circulated widely in Nigerian tech communities, is among the earliest documented cases of an African data labeller publicly naming the harm.

The Continental Standard — Not Yet Binding

The African Union’s Data Policy Framework, adopted in 2022, contains provisions specifically addressing data worker rights. Article 9 calls for disclosure, transparency, and fair compensation for African workers whose data contributions are used in AI training. It is, however, a policy framework — not binding law — and has not been domesticated into any national legislation on the continent.

Civil society organisations working on digital rights in both Kenya and Nigeria have pointed to the AU framework as the appropriate vehicle for a continent-wide standard. “The individual country regulators cannot solve this alone,” said a researcher at Paradigm Initiative, a Nigerian digital rights organisation. “The data leaves Kenya as a local employment arrangement and arrives in Washington as a defence asset. You need continental-level rules to address that gap.”

What Accountability Should Look Like

The minimum steps that would bring current practices into compliance with existing law are straightforward in principle. Data collection agreements should disclose all categories of downstream use at the point of contracting, including government and military use. Workers who provided data under contracts that did not disclose such uses should be notified retroactively. And Kenya’s ODPC and Nigeria’s Data Protection Commission (NDPC) should each issue specific guidance on disclosure obligations for AI training data sub-licensing.

More structurally, Rutenberg argues, the solution requires a supply chain transparency register — an auditable record of how training data collected from African workers flows through commercial re-licensing arrangements. “You have supply chain transparency requirements in sectors like minerals and garments,” he said. “There is no principled reason not to apply the same logic to AI training data.”

The third tier of accountability is continental: elevating the AU Data Policy Framework’s Article 9 provisions into binding law, whether through AU member state domestication or through a dedicated continental instrument on AI data labour rights.

For Hassan in Nairobi and Ismail in Lagos, these are questions of political economy that feel very far from the daily reality of clicking through image labels at $4 an hour. But the policy conversation has, at least, begun. The question is whether the momentum outlasts the news cycle.

Appen was contacted on 10 March 2026 with specific written questions regarding its contractor networks in Kenya and Nigeria, informed consent practices, and compliance with the Kenya Data Protection Act 2019 and Nigeria Data Protection Act 2023. Appen did not respond to requests for comment by the publication deadline of 12 March 2026. This article will be updated if Appen responds.

The Kenya Ministry of Labour and Social Protection and the Nigeria Labour Congress were also contacted with written questions. Responses are expected by 17 March 2026 and will be incorporated into a subsequent update of this article if received.