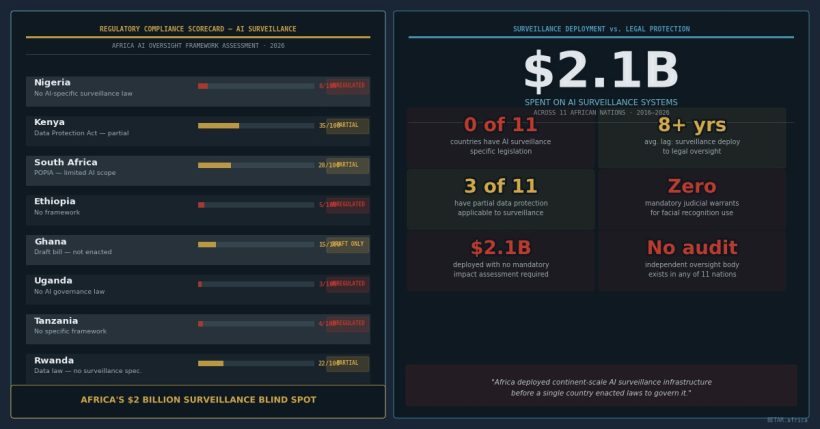

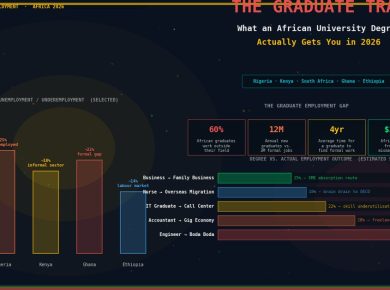

Africa’s data protection laws were designed to constrain surveillance. Most of them contain a clause that makes that constraint inapplicable to the state. The national security exemption — standard language in data protection frameworks from Nigeria to South Africa to Kenya — carves out government surveillance activities from the very protections that the legislation was meant to guarantee. When that exemption is combined with the $2 billion in AI surveillance infrastructure that eleven African countries have acquired from Chinese vendors, the result is a legal architecture that authorises, by omission, exactly what it formally prohibits.

This is the deeper regulatory story behind the March 2026 joint investigation by the Institute of Development Studies and the African Digital Rights Network, which first surfaced the scale of Chinese AI surveillance spending across the continent. BETAR covered the infrastructure story (Huawei, ZTE, $470 million for Nigeria alone) in a companion analysis of infrastructure and vendor accountability. The legal story is structurally different and, for the citizens and civil society organisations trying to challenge these deployments, more consequential.

Eight of the nine African countries examined here with operational AI surveillance systems have enacted data protection laws; Ethiopia’s framework is still in draft. In each of those laws, a national security or public order exemption is broad enough to render the legislation inoperative for state surveillance activities. The exemption does not swallow the rule by accident. It is a deliberate design choice, one that reflects both the political economy of African security establishments and the weakness of constitutional oversight frameworks relative to executive power.

The Exemption That Swallows the Rule

Data protection law operates on a basic principle: the processing of personal data requires a lawful basis. Consent, legitimate interest, contractual necessity, and legal obligation are the standard categories. State surveillance systems — smart cameras, facial recognition platforms, behavioural analytics networks — process vast quantities of personal data. Under a functioning data protection framework, that processing would require a documented lawful basis, subject to challenge and review.

The national security exemption removes state surveillance activities from that accountability structure entirely. Nigeria’s Data Protection Act (2023) — arguably the most modern comprehensive framework on the continent — exempts processing undertaken in the interests of national security or public safety. Kenya’s Data Protection Act (2019) contains a substantially similar carve-out. South Africa’s Protection of Personal Information Act (POPIA) exempts processing by the state “in the interest of national security.” In no case does the legislation define what constitutes a qualifying national security activity, establish a threshold test, require judicial authorisation, or create a mechanism for independent review of whether a claimed exemption is legitimate.

The practical consequence is that the same government deploying a Huawei facial recognition network across its capital cities can claim national security exemption for that entire operation — and no data protection regulator in any of these jurisdictions has the legal mandate to examine whether that claim is warranted. The Office of the Data Protection Commissioner in Kenya, the Nigeria Data Protection Commission, and South Africa’s Information Regulator are each expressly excluded from oversight of activities covered by national security exemptions. They cannot require disclosure. They cannot audit. They cannot investigate complaints.

The Country Regulatory Matrix

The gap between data protection law on paper and its application to state AI surveillance is consistent across the nine countries with operational surveillance infrastructure mapped below. Eight have enacted data protection frameworks; Ethiopia’s is still in draft.

| Country | DPA Framework | State Surveillance Applicability | Independent Oversight Body | AI-Specific Surveillance Law |
|---|---|---|---|---|
| Nigeria | Nigeria Data Protection Act (2023) | Excluded: national security exemption (s.25) | Nigeria Data Protection Commission (no surveillance mandate) | None |
| Kenya | Data Protection Act (2019) | Excluded: national security exemption (s.51) | Office of the Data Protection Commissioner (no surveillance mandate) | None |
| South Africa | POPIA (2020) | Partial: RICA governs interception; AI surveillance ungoverned | Information Regulator (jurisdiction excludes national security) | None (AI policy in draft) |
| Uganda | Data Protection and Privacy Act (2019) | Excluded: broad public interest exemption | Personal Data Protection Office (no enforcement record) | None |
| Tanzania | Personal Data Protection Act (2022) | Excluded: national security and law enforcement exemptions | Personal Data Protection Commission (operational since 2023) | None |
| Ethiopia | None (draft Personal Data Protection Proclamation under review) | No framework applies | None | None |
| Egypt | Personal Data Protection Law No. 151 (2020) | Excluded: explicit state security exemption | Data Protection Centre (limited independence) | None |
| Zambia | Data Protection Act (2021) | Excluded: national security and public order exemptions | Zambia Information and Communications Technology Authority (dual function) | None |
| Zimbabwe | Data Protection Act (2021) | Excluded: broad public interest and state security exemptions | Postal and Telecommunications Regulatory Authority (no DPA enforcement track record) | None |

Source: BETAR.africa regulatory mapping, March 2026. Country DPA texts; ADRN country analysis.

South Africa is a partial exception in that the Regulation of Interception of Communications and Provision of Communication-Related Information Act (RICA) does establish some procedural requirements for electronic surveillance, including judicial authorisation for interception. But RICA was designed for communications interception, not AI-powered video surveillance, facial recognition, or behavioural analytics. It has not been interpreted or applied to the smart city infrastructure that has been expanding in South African municipalities since 2020. The gap between RICA’s scope and current surveillance practice is wide enough to drive the entire Huawei safe-city deployment through it.

The Audit Vacuum

Even where data protection regulators have formal jurisdiction over some government data processing activities, none has the technical capacity to assess AI surveillance systems. An audit of a facial recognition platform deployed across a major African city requires expertise in machine learning systems, biometric data processing, algorithmic decision-making, and cross-border data transfer architecture. No African data protection authority has staff with that combination of skills. Several have limited analytical capacity even for conventional data processing audits.

This is not a budget problem that an injection of resources would solve in the short term. Building genuine technical audit capacity for AI systems requires years of recruitment, training, and institutional knowledge development. The Collaboration on International ICT Policy in East and Southern Africa (CIPESA) documented in its 2025 annual surveillance report that across the nine countries with AI surveillance deployments, zero independent technical audits of those systems have been conducted by any government body. The systems are running. No one with the mandate, the access, or the technical skills is examining what they are actually doing.

The audit vacuum creates a secondary problem: there is no reliable baseline. Governments do not know, or will not disclose, the error rates of their facial recognition systems, the scope of data sharing with Chinese vendors, the retention periods for the biometric data they collect, or the extent of cross-border data flows to systems maintained in China. Civil society organisations have attempted to obtain this information through freedom of information mechanisms in Kenya, South Africa, and Nigeria. None has received a substantive response.

The Human Rights Dimension

The legal gap has documented human rights consequences. CIPESA’s monitoring across East and Southern Africa identified cases in Uganda, Tanzania, and Zimbabwe where AI surveillance systems were used to track journalists, political opposition figures, and civil society activists. Paradigm Initiative’s 2025 Digital Rights in Africa report documented a chilling effect on civil society in all three countries: respondents self-censored public activities, avoided identified surveillance zones, and restricted digital communications because of the perceived reach of surveillance systems whose actual capabilities they could not assess.

These are not hypothetical risks in jurisdictions where AI surveillance is a distant prospect. They are documented outcomes in countries where the infrastructure has been operational for three to seven years. The national security exemption that removes these systems from data protection law also removes the most straightforward legal mechanism through which affected individuals could seek redress. Constitutional litigation — the alternative — is expensive, slow, and contingent on judicial independence that varies significantly across the nine-country matrix.

The AU’s Special Rapporteur on Freedom of Expression and Access to Information has raised concerns about surveillance and digital rights in several reports since 2021. None of those reports has prompted member state action to narrow national security exemptions or extend data protection law to government AI surveillance systems.

The AU Governance Gap

The African Union has three instruments with potential relevance to AI surveillance governance: the Malabo Convention (adopted 2014, in force 2023, 15 ratifications), the Data Policy Framework (2022, updated 2025–26), and the AU AI Continental Strategy (2024). None of them addresses AI-powered state surveillance as a specific domain requiring governance.

The Malabo Convention establishes baseline data protection principles and addresses cybercrime, including unauthorised interception of data. But its surveillance provisions were drafted in 2014, before facial recognition platforms and behavioural analytics systems were practical tools for African governments. Its text does not contemplate systems that were not yet in practical use when it was written, and no member state has proposed a protocol to extend it.

The AU Data Policy Framework sets out principles for data governance but is explicitly non-binding. Its 2025–26 update added provisions on cross-border data flows and AI, but the working group that drafted the update did not include surveillance governance in its mandate. The AU AI Continental Strategy focuses on AI’s developmental applications — agriculture, healthcare, education — and is silent on surveillance.

The diplomatic constraint is structural. The member states most invested in Chinese surveillance infrastructure include several that have deployed those systems against political opponents. They are not neutral parties in a debate about binding surveillance governance frameworks. And the AU’s consensus-based decision-making model means that binding instruments require agreement from precisely those governments that have the most to lose from effective oversight.

A Reform Pathway That Requires Political Will

Three reforms would materially change the legal landscape. None of them is technically difficult. All of them require political will that is currently absent at both the country and continental levels.

First, targeted surveillance legislation — specific laws governing AI-powered surveillance that establish a lawful basis requirement, algorithmic impact assessment obligations, proportionality standards, and judicial authorisation mechanisms — would close the exemption loophole more effectively than amending data protection frameworks. Several European jurisdictions have moved in this direction; no African country has tabled a bill of this type.

Second, independent technical audit capacity, whether established within existing data protection authorities, through a regional body, or through a specialised multi-country mechanism, would create the baseline assessment capability that is currently entirely absent. The AU’s Digital Transformation Strategy and the GSMA’s mobile network security work provide models for multi-country technical cooperation that could be adapted. Funding for this capacity could come from the same development finance and donor community (the African Development Bank, SIDA, the European Investment Bank) that has been financing digital infrastructure across the continent.

Third, and most fundamentally, blanket national security exemptions in data protection legislation need to be replaced with scoped carve-outs that establish threshold tests, require ministerial or judicial authorisation, and are subject to independent review. This is not an unprecedented ask: the United Kingdom’s Investigatory Powers Act (2016), for all its flaws, at least establishes a mechanism through which surveillance authorisations can be reviewed. African data protection frameworks are currently less protective than a 2016 UK statute — in countries whose governments are deploying more extensive surveillance infrastructure.

The exemption architecture that renders Africa’s data protection laws inapplicable to the continent’s most significant data processing operations is not a regulatory failure. It is a design choice. Reversing it requires acknowledging that choice and making a different one. So far, no government has shown any intention of doing so.

This analysis is a companion to BETAR’s coverage of the financial and infrastructure dimensions of Africa’s AI surveillance spending, examining the $2 billion in Chinese vendor contracts across eleven African countries and the accountability gap in procurement oversight.