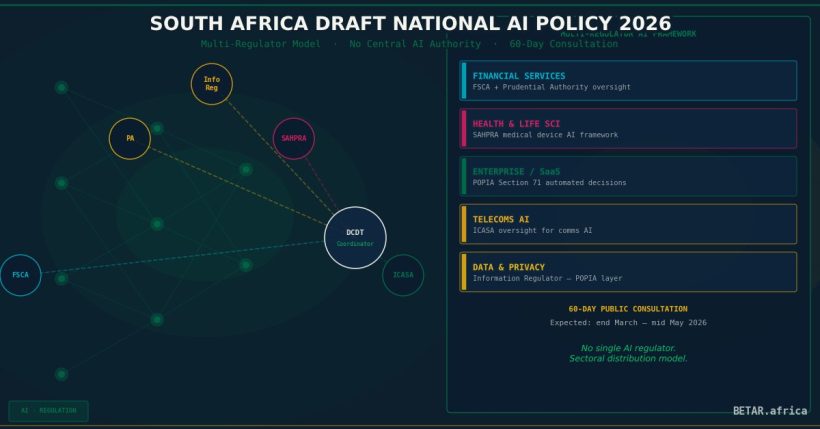

South Africa has chosen a path that sets it apart from every other African nation attempting to govern artificial intelligence: rather than creating a new AI authority, the country is embedding AI oversight inside the regulators that already govern financial services, healthcare, and telecommunications.

The Department of Communications and Digital Technologies (DCDT) briefed Parliament on the Draft National AI Policy on February 24, 2026. The policy is advancing toward Cabinet approval and is expected to be gazetted for a 60-day public consultation in March 2026; a mid-March gazette would put the close of that window around mid-May. For businesses operating in South Africa, this consultation is the last meaningful opportunity to shape how AI liability and oversight will work in Africa’s most developed economy.

How the Multi-Regulator Model Works

The centrepiece of South Africa’s approach is sectoral distribution. There will be no South African AI Agency, no single Commissioner, no centralised licensing desk. Instead:

– Financial services AI will be overseen by the Financial Sector Conduct Authority (FSCA) and the Prudential Authority (PA), building on existing frameworks under the Financial Advisory and Intermediary Services (FAIS) Act and the Financial Markets Act.

– Health AI — diagnostic tools, clinical decision support, patient data applications — will fall under the South African Health Products Regulatory Authority (SAHPRA) and the Health Professions Council of South Africa (HPCSA).

– Telecoms and communications AI will be regulated through the Independent Communications Authority of South Africa (ICASA).

– Data-heavy AI applications will continue to intersect with the Information Regulator, which administers the Protection of Personal Information Act (POPIA).

The policy designates the DCDT as coordinator — a convening body rather than an authority — responsible for cross-sectoral alignment and international AI governance engagement.

For companies deploying AI across multiple sectors (a fintech using AI for credit scoring and health insurance, for instance), this creates a multi-regulator compliance reality. There will be no one-stop desk.
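As a rough illustration of what that multi-regulator mapping could look like in practice, the sketch below encodes the sector assignments listed above as a lookup table. The `AISystem` class, the `regulators_for` helper, and the sector keys are hypothetical names chosen for this example; the draft policy does not prescribe any such tooling, and a real mapping would need legal review.

```python
from __future__ import annotations

from dataclasses import dataclass

# Sector-to-regulator assignments as summarised in this article.
# The dictionary keys are illustrative labels, not official categories.
SECTOR_REGULATORS = {
    "financial_services": ["FSCA", "PA"],
    "health": ["SAHPRA", "HPCSA"],
    "telecoms": ["ICASA"],
}


@dataclass(frozen=True)
class AISystem:
    """Hypothetical inventory record for one deployed AI system."""
    name: str
    sectors: tuple[str, ...]
    processes_personal_info: bool = False


def regulators_for(system: AISystem) -> list[str]:
    """Return the de-duplicated regulators a system is likely to touch."""
    found: list[str] = []
    for sector in system.sectors:
        for regulator in SECTOR_REGULATORS.get(sector, []):
            if regulator not in found:
                found.append(regulator)
    # POPIA applies wherever personal information is processed.
    if system.processes_personal_info and "Information Regulator" not in found:
        found.append("Information Regulator")
    return found


# The fintech example from the text: credit scoring plus health insurance.
fintech = AISystem(
    name="credit and health-cover scoring",
    sectors=("financial_services", "health"),
    processes_personal_info=True,
)
print(regulators_for(fintech))
# ['FSCA', 'PA', 'SAHPRA', 'HPCSA', 'Information Regulator']
```

Even this toy inventory shows a single product answering to five bodies, which is the practical meaning of "no one-stop desk".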

The Business Compliance Landscape

Financial Services

Firms using AI for credit origination, fraud detection, algorithmic trading, or insurance underwriting will face the most immediate compliance exposure. The FSCA has already signalled interest in AI explainability requirements — i.e., the ability to demonstrate why an AI system reached a particular decision — aligned with its Treating Customers Fairly (TCF) framework.

The Prudential Authority is expected to address model risk management for AI systems embedded in banking operations, likely through amendments to existing model risk guidance under Basel III implementation.

Action required: Financial sector compliance teams should begin mapping AI systems to existing FSCA/PA notification and approval requirements now, ahead of any formal AI-specific guidance that emerges post-consultation.

Health and Life Sciences

AI in clinical settings — including diagnostic imaging, drug interaction checks, and remote patient monitoring — is already subject to SAHPRA’s medical device framework. The AI Policy is expected to tighten classification requirements for AI-enabled medical devices and may introduce specific clinical validation standards.

Companies that have deployed AI health tools under current SAHPRA guidance should review whether reclassification risk applies under the new framework.

Cloud, SaaS, and Enterprise Technology

Enterprise software companies deploying AI features into South African customers’ workflows face a fragmented compliance picture. An HR platform with AI-powered candidate screening, for example, will intersect with the Information Regulator (POPIA, automated decision-making provisions) and potentially with sector regulators if the employer is in financial services or health.

POPIA’s Section 71, which protects data subjects from decisions with legal or similarly substantial effects that are based solely on automated processing of their personal information, is already operative and is expected to be reinforced, not replaced, by the AI Policy framework.

South Africa vs. Nigeria: Two Models for Africa’s Two Largest Economies

The contrast with Nigeria’s National Artificial Intelligence Strategy and the National Artificial Intelligence Development Agency (NAIDA) Bill 2026 is instructive.

Nigeria is pursuing a centralised model: a dedicated NAIDA would be responsible for AI licensing, safety assessments, and enforcement across sectors. The Bill proposes a mandatory pre-deployment registration regime for high-risk AI systems.

South Africa is doing the opposite — and doing it deliberately. The DCDT has explicitly noted that a single AI regulator risks creating bureaucratic bottlenecks that slow adoption. South Africa’s model bets that sector regulators already understand the domain risks better than any generalist AI authority could.

The trade-off is predictability. Businesses operating in Nigeria will, eventually, have a single contact point for AI compliance. Businesses operating in South Africa will need to navigate multiple regulators, each moving at different speeds with different enforcement cultures.

For multinationals and pan-African operators deploying AI across both markets, this divergence creates a bifurcated compliance architecture. Legal and compliance teams should begin mapping now which AI systems are deployed in which jurisdictions.

The 60-Day Consultation: What’s at Stake

When the Draft National AI Policy is gazetted, businesses will have 60 days to submit formal representations. Based on the February 24 parliamentary briefing and the policy’s trajectory, the consultation is expected to open before the end of March 2026. A mid-March gazette would put the close around mid-May 2026; a gazette at the end of March would push it toward the end of May.
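Because the closing date depends entirely on when the gazette appears, the window arithmetic is worth checking directly. The sketch below assumes the 60 days are counted as calendar days from the gazette date, which is an assumption rather than something the draft policy confirms; the gazette dates are hypothetical and the `consultation_close` helper is an illustrative name.

```python
from datetime import date, timedelta

CONSULTATION_DAYS = 60  # from the article; calendar-day counting is assumed


def consultation_close(gazette_date: date, days: int = CONSULTATION_DAYS) -> date:
    """Closing date if the window runs `days` calendar days from gazetting."""
    return gazette_date + timedelta(days=days)


# Hypothetical gazette dates across March 2026, for illustration only.
for gazetted in (date(2026, 3, 2), date(2026, 3, 16), date(2026, 3, 31)):
    print(gazetted.isoformat(), "->", consultation_close(gazetted).isoformat())
# 2026-03-02 -> 2026-05-01
# 2026-03-16 -> 2026-05-15
# 2026-03-31 -> 2026-05-30
```

Under these assumptions the close could fall anywhere in May, so submission planning should key off the actual gazette notice and its stated deadline, not an estimated date.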

Key questions that remain unresolved in the current draft — and that the consultation should address — include:

1. High-risk AI classification thresholds. The policy refers to high-risk AI but does not yet define thresholds with the specificity of the EU AI Act. Industry should push for clear, sector-specific definitions.

2. Cross-border AI liability. When an AI system is developed abroad and deployed in South Africa, who bears liability — the developer, the deployer, or the South African business customer? Current draft language is ambiguous.

3. AI audit and explainability standards. The financial and health sectors will likely face mandatory audit requirements, but the technical standards for those audits are not yet specified. Industry bodies have an opportunity to shape these before they calcify into regulation.

4. SME and startup carve-outs. South Africa’s technology ecosystem is dominated by a mix of large financial institutions and smaller startups. The consultation is an opportunity to advocate for tiered compliance obligations that don’t impose enterprise-scale AI governance requirements on seed-stage companies.

What Businesses Should Do Now

Ahead of gazette (before end of March 2026):

– Brief your compliance and legal teams on the multi-regulator model and identify which sector regulators apply to each AI application in your stack.

– Review POPIA Section 71 compliance — automated decision-making obligations are already live.

– Identify trade associations or industry bodies (FinTech South Africa, BUSA, SAIA) through which you may coordinate a joint submission.

During the 60-day consultation window:

– Submit formal representations on high-risk classification thresholds, cross-border liability, and technical audit standards.

– Engage DCDT directly if you operate AI systems that cross multiple sector boundaries — the coordinator function is specifically designed to handle these cases.

– Monitor parallel developments at the FSCA and PA, which may issue AI-specific guidance notes concurrent with or shortly after the consultation.

Longer-term planning:

– For companies operating across South Africa and Nigeria, begin building a dual-jurisdiction AI compliance matrix. The regulatory gap between these two approaches will persist for at least two to three years.

– Track AU Digital Transformation Strategy alignment — South Africa participates in AU AI governance working groups, and continental standards may eventually influence the domestic framework.
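The dual-jurisdiction matrix suggested above could start as something as simple as the following sketch. The system names and deployments are a hypothetical inventory, the `build_matrix` helper is illustrative, and the model descriptions only restate this article's summary (the Nigerian entry reflects a Bill that is still a proposal).

```python
from itertools import product

# Regulatory models as summarised in this article, not legal definitions.
JURISDICTION_MODEL = {
    "South Africa": "sectoral: existing regulators, DCDT coordinates",
    "Nigeria": "centralised: proposed NAIDA, registration for high-risk systems",
}

# Hypothetical inventory: system name -> jurisdictions where it is deployed.
DEPLOYMENTS = {
    "credit-scoring model": {"South Africa", "Nigeria"},
    "candidate-screening tool": {"South Africa"},
}


def build_matrix(deployments):
    """Return one row per (system, jurisdiction) pair, deployed or not."""
    rows = []
    for system, jurisdiction in product(sorted(deployments), JURISDICTION_MODEL):
        deployed = jurisdiction in deployments[system]
        model = JURISDICTION_MODEL[jurisdiction] if deployed else "n/a"
        rows.append((system, jurisdiction, deployed, model))
    return rows


for row in build_matrix(DEPLOYMENTS):
    print(row)
```

Listing the non-deployed pairs explicitly makes gaps visible when a system later expands into the second market.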

The Bottom Line

South Africa’s Draft National AI Policy represents a pragmatic, business-aware approach to AI governance, one that avoids the bottleneck risk of a single new authority and builds on regulators with existing domain expertise. But pragmatism has a cost: because the DCDT coordinates without enforcement powers of its own, AI governance is likely to be uneven across sectors and slow to respond to cross-sectoral risks.

The 60-day consultation, once gazetted, is not a formality. It is the design phase. Businesses with material AI exposure in South Africa — especially in financial services, health, and enterprise SaaS — should treat their consultation submissions as operational investments, not compliance exercises.

BETAR.africa tracks regulatory developments across all 54 African nations. This analysis is based on public statements, parliamentary briefings, and law firm client advisories available as of March 2026. The Draft National AI Policy had not been gazetted at time of publication. Monitor the Government Gazette for the DCDT notice that opens the official consultation.