From Cape Town to Nairobi, Africa’s universities are navigating a three-way collision between AI tools embedded in student workflows, academic integrity systems that were not built for this moment, and the structural inequality that ensures the same technology challenge lands differently on different campuses.

At the University of Cape Town, the Senate Teaching and Learning Committee made a quiet but significant decision, announced in July 2025: the university would stop using the AI detection score in Turnitin, effective October 1, 2025. The committee’s reasoning was not principled tolerance of AI-generated work. It was the recognition that the tool did not work well enough to be trusted, and that the damage from false accusations outweighed the utility of automated detection.

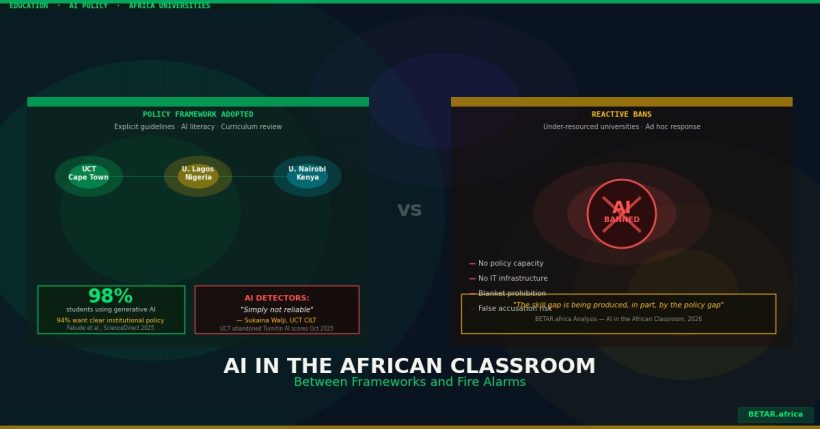

That decision — taken at one of Africa’s best-resourced universities, after a formal institutional review — illustrates the complexity of the AI question in African higher education in ways that the usual “ban or allow” framing does not capture. What is happening on campuses across the continent is not a single story. It is a series of institutional responses to the same disruption, stratified by resource, region, and the willingness of leadership to move beyond reflexive prohibition.

The Divergence in Institutional Response

UCT’s response is among the most developed on the continent. The university published a formal “UCT Framework for AI in Education,” endorsed by the Senate Teaching and Learning Committee in June 2025, which provides explicit guidance on the responsible use of AI in teaching, assessment, and research. The framework covers the promotion of AI literacy among staff and students, curriculum review to accommodate AI across disciplines, and a rethink of how assessment integrity can be maintained when AI tools are part of everyday study. The decision to abandon AI detection scoring rests on a specific finding: the tools are unreliable. That unreliability is likely to weigh most heavily on students who do not write in standard Western academic English.

Sukaina Walji, director of UCT’s Centre for Innovation in Learning and Teaching, was direct about the failure mode. “We are finding a number of false positives, where students have been accused of using AI when in fact they haven’t, but also false negatives where AI text is not being detected,” she said. “AI detectors are simply not reliable. There are no magic solutions.” Walji noted that such inaccuracies undermine trust between staff and students — “which is detrimental to the teaching and learning environment.”

This matters more for African institutions than for their counterparts in Europe or North America. AI detection tools are trained predominantly on US and UK English-language text. They flag AI writing by identifying patterns (sentence-level predictability, statistical regularity, syntactic uniformity) that may also appear in the academic writing of non-native English speakers, multilingual writers, and students whose prose reflects African varieties of English. Research on false-positive rates for African academic writing specifically is still limited. But studies elsewhere have already found detectors disproportionately flagging essays by non-native English writers, and there is little reason to expect a tool calibrated on American and British prose to transfer cleanly to Accra or Nairobi.

At the other end of the spectrum, many of Africa’s under-resourced public universities have taken the opposite approach. Without the institutional capacity to develop nuanced AI frameworks, or the IT infrastructure to deploy and manage AI literacy programmes, administrators have defaulted to blanket prohibitions. Students may not use AI. Work identified as AI-generated will be failed or referred for misconduct proceedings. These are policies written in the absence of policy capacity, and they place the heaviest burden on honest students whose writing patterns happen to resemble AI output.

Who Is Building AI Literacy

The curriculum reform story is younger and thinner than the integrity debate, but it exists.

The University of Lagos became the first African institution to host an OpenAI Academy, a capacity-building partnership designed to integrate AI literacy into undergraduate and postgraduate education. The initiative is notable for its institutional signal: a major Nigerian university, in a formal partnership with the world’s most prominent AI developer, treating AI not as a threat to academic integrity but as a literacy domain to be taught.

In Kenya, the University of Nairobi and the Jomo Kenyatta University of Agriculture and Technology have each moved to integrate AI tools into research and postgraduate supervision workflows, in line with the draft Kenya National AI Strategy 2025–2030 that the government released for public consultation. The strategy explicitly identifies higher education AI integration as a national economic priority, framing universities as builders of the AI workforce pipeline rather than passive recipients of technological disruption.

South Africa’s university sector, led by UCT’s published framework, is the most institutionally advanced in terms of policy articulation. But the research literature surfaces a persistent gap. In a detailed survey of 102 master’s students in engineering management at a South African university, published in June 2025, 98 percent of respondents reported actively using generative AI, and 94 percent said they needed clear institutional policies to guide its ethical use. The tools are already embedded in the workflow. The policies are still catching up.

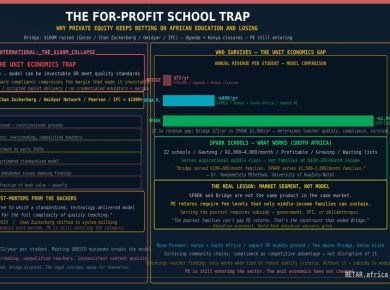

The Equity Fault Line

The most consequential dimension of the AI-in-universities story — and the least-discussed one — is not plagiarism. It is access asymmetry.

Consider the contrast. UCT students receive a clear institutional framework, AI literacy support, and a formal position on detection tools that protects them from false accusation. Students at an under-resourced polytechnic in northern Nigeria may instead receive a blanket ban issued by administrators who have neither the tools, the training, nor the budget to manage anything more sophisticated. Both cohorts are likely using ChatGPT. Only one is being supported in using it ethically, effectively, and in ways that will translate into professional AI competence.

The consequences compound. Africa’s employers, including the technology companies, consultancies, and financial services firms that are the primary destinations for university graduates in the formal economy, are integrating AI into their workflows at pace. The graduate who spent four years at a university where AI was banned enters a workplace where AI fluency is assumed. The graduate who spent those years learning when and how to use AI productively arrives with a genuine competitive advantage.

UNESCO’s analysis of AI in African education systems flags this as a structural equity risk: not just between Africa and the developed world, but within Africa, between institutions that have the capacity to respond thoughtfully and those that do not. The “cultural cost of AI” in education is not only about content — whose knowledge is embedded in AI training data, whose languages are represented — though that dimension matters. It is also about which students are equipped to operate in the AI economy and which are systematically denied that preparation by the institutions that were supposed to equip them.

What Thoughtful Response Actually Requires

The universities doing this well share a pattern: they have made explicit policy decisions rather than defaulting to reflexive bans, published those decisions where students and faculty can find them, and connected AI policy to curriculum review rather than treating it as a purely disciplinary question.

That pattern requires institutional capacity — senior leadership attention, time from faculty who are already under-resourced, and a culture of policy deliberation that many African universities have not historically prioritised. It also requires honesty about what detection tools can and cannot do — a conversation that most institutions are not yet having publicly, even though the academic integrity officers managing the systems know the limitations.

The bootcamp and alternative training sector has moved faster. ALX Africa’s curriculum is explicitly AI-integrated. Moringa School’s updated programmes assume AI tool proficiency as a baseline, not a prohibited shortcut. The formal university sector, which trains the majority of Africa’s professional workforce, is moving more slowly and more unevenly.

That gap, between the alternative training pipeline and the formal university system, is not new. It is the same gap that BETAR’s AI Skills Gap analysis documented in early 2026, and the same gap that EdTech investors are scrambling to fill. What the AI-in-classrooms story adds is a specific mechanism: the institutions with the resources to adapt are adapting, and those without them are falling further behind. The skills gap is being produced, in part, by the policy gap.

Sources: UCT Centre for Innovation in Learning and Teaching, “UCT Framework for AI in Education,” June 2025; UCT News, “Introducing UCT’s AI in Education Framework,” July 15, 2025; UCT News, “UCT scraps flawed AI detectors,” July 24, 2025 [Sukaina Walji quotes]; Fakude et al., “Artificial intelligence in South African higher education: Survey data of master’s level students,” ScienceDirect, June 2025 [source of 98%/94% statistics, n=102 engineering management master’s students]; Frontiers in Education, “Exploring ethical dilemmas and institutional challenges in AI adoption: South African universities,” 2025; IIEP-UNESCO/Discover Education, “Academic integrity in the age of AI: challenges and strategies for African higher education,” 2025; Pangram Labs, “The State of Academic Integrity and AI Detection 2025”; UNESCO, “The cultural cost of AI in Africa’s education systems”; UNICEF Innocenti, “How AI can transform Africa’s learning crisis,” 2025; Mastercard Foundation, “AI in Africa 2025” report; Kenya draft National AI Strategy 2025-2030; UNESCO-Eastern Africa Sub-Regional Forum, “Nairobi Statement on Artificial Intelligence and Emerging Technologies in Eastern Africa,” June 2024; BETAR.africa BETA-494 (Africa AI Skills Gap); BETA-585 (Microsoft Elevate Africa); BETA-738 (Africa EdTech Investment Q1 2026).