Kenya’s Artificial Intelligence Bill 2026 does something Nigeria’s landmark AI law deliberately avoided: it requires companies to get government approval before deploying high-risk AI systems. That single architectural choice — prior approval versus post-hoc enforcement — separates the two biggest tech ecosystems in sub-Saharan Africa and will define the compliance reality for startups across both markets for years to come.

The bill, introduced in Kenya’s Senate on February 19, 2026 by nominated Senator Karen Nyamu, proposes the most detailed AI governance structure yet seen anywhere on the continent. Three institutions would share regulatory authority: an Artificial Intelligence Commissioner with enforcement powers, an Artificial Intelligence Authority responsible for standards and strategy, and an Artificial Intelligence Advisory Council serving as an expert consultative body. A person deploying a high-risk AI system without the Commissioner’s sign-off would face fines of up to KES 5 million — approximately $38,000 at current exchange rates — and up to three years in prison.

Nigeria’s approach, encoded in the National Digital Economy and E-Governance Bill that cleared both chambers of the National Assembly earlier this year, puts NITDA — the National Information Technology Development Agency — at the centre of a single-regulator enforcement model. High-risk AI developers must classify their systems correctly and build to compliance standards from day one, but they do not need pre-deployment sign-off from any government body. NITDA inspects after the fact.

Both frameworks are risk-based. Both are overdue. But they are not the same, and the difference will shape how AI-native startups build, scale, and price their products across East and West Africa.

What Kenya’s Bill Actually Proposes

Under the Kenya bill’s three-tier governance structure, the AI Commissioner sits at the top of the enforcement pyramid — responsible for registering AI systems, conducting audits, investigating compliance breaches, and crucially, approving or rejecting applications to deploy high-risk AI. The AI Authority, a separate body, sets technical and ethical standards, manages regulatory sandboxes, and develops Kenya’s national AI strategy. The AI Advisory Council provides expert input but holds no enforcement mandate.

The bill’s definition of high-risk AI is expansive. Systems operating in healthcare, education, agriculture, finance, security, employment, and public administration all fall within its scope. In practice, this captures most of the AI-native products being built inside Nairobi’s tech ecosystem today. Agri-AI platforms serving smallholder farmers, credit scoring models at micro-lenders, AI-powered school assessment tools — all would require Commissioner registration and, where they affect individual rights or economic access, pre-deployment approval.
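The two-step test described above can be sketched in a few lines of Python. This is an illustration of the article's summary, not the bill's legal text: the `AISystem` type, the `kenya_obligations` function, and the `affects_rights_or_economic_access` flag are hypothetical names introduced here, and the final wording of the bill may draw the lines differently.

```python
from dataclasses import dataclass

# Sectors this article lists as high-risk under the Kenya bill.
HIGH_RISK_SECTORS = frozenset({
    "healthcare", "education", "agriculture", "finance",
    "security", "employment", "public administration",
})


@dataclass(frozen=True)
class AISystem:
    """Hypothetical description of a product, for illustration only."""
    name: str
    sector: str
    affects_rights_or_economic_access: bool


def kenya_obligations(system: AISystem) -> list[str]:
    """Return the obligations described in this article, in order.

    Assumption: registration applies to every high-risk system, and
    pre-deployment approval applies only when the system affects
    individual rights or economic access.
    """
    if system.sector.lower() not in HIGH_RISK_SECTORS:
        return []
    obligations = ["register with Commissioner"]
    if system.affects_rights_or_economic_access:
        obligations.append("obtain pre-deployment approval")
    return obligations


credit_model = AISystem("micro-lender credit scorer", "finance", True)
print(kenya_obligations(credit_model))
```

Under these assumptions, a credit scoring model at a micro-lender picks up both obligations, while a product outside the listed sectors picks up none.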

Citizens gain meaningful protections under the bill: the right to know how an AI system reached a decision that materially affects them, and the right to request a human review of automated determinations. All AI training and deployment must comply with Kenya’s Data Protection Act, 2019, creating an explicit link between AI governance and the existing data regulatory regime.

Penalties for harmful AI-generated content — deepfakes, synthetic disinformation — carry separate provisions: fines up to KES 5 million and up to two years in prison. The bill was introduced in the wake of manipulated AI-generated images of Senator Nyamu herself circulating online, a detail that underscores the political urgency that brought it to the Senate floor.

The Prior Approval Problem

The most consequential difference between Kenya’s framework and Nigeria’s is not the penalty structure or the governance architecture. It is the pre-deployment approval requirement.

Nigeria’s NITDA model demands compliance from the moment a high-risk AI system goes into production, but it does not require developers to seek government sign-off before launching. A fintech building a credit scoring model must classify it correctly, document it adequately, build an explainability layer, and stand ready for NITDA audit — but it can ship first and face inspection afterward. The burden is real, but the model preserves the build-fast pace that has defined Lagos’s fintech ascent.

Kenya’s Commissioner model inverts that sequence. Before a high-risk system can go live, it must pass through a government registration and approval process whose timelines the bill does not yet specify. For a startup racing to close a funding round or capture a market window, the introduction of a regulatory gate between “ready to ship” and “permitted to ship” is not a compliance cost — it is a product velocity constraint.

Legal advisory firm Cliffe Dekker Hofmeyr, in its March 18 analysis of the bill, acknowledged the innovation tension directly: “The AI Bill introduces a regulatory framework that seeks to protect users of AI tools without stifling Kenya’s culture of innovation.” The operative word is “seeks.” Whether the Commissioner’s approval queue can move fast enough to avoid becoming a bottleneck is an empirical question the bill leaves unanswered.

The Open-Source Audit Trail Problem

Both Kenya’s and Nigeria’s frameworks share a common technical blind spot: the assumption that AI developers know exactly how the models underlying their products were trained.

In practice, a significant portion of Nairobi’s and Lagos’s AI developer community does not build foundation models from scratch. They take open-source models such as Meta’s Llama, Mistral, and Falcon, fine-tune them on local datasets, and deploy them in production. The compliance obligations in both bills contemplate full audit trails of training data, model architecture, and decision logic. For a developer using a third-party open-source base model, producing that documentation is often structurally impossible. They cannot supply an audit trail for the base model, because they were not involved when it was trained.

This creates a compliance gap that neither government has yet addressed. Kenya’s pre-approval model arguably makes the gap more acute: if the Commissioner’s office requires training documentation as part of the registration process, a startup running a fine-tuned Llama deployment cannot comply, not because of negligence but because the underlying data is held by Meta and not disclosed. HapaKenya’s commentary on the bill flagged this as a threat to innovation, noting that requiring developers “to produce a full audit trail of how the underlying model was trained may simply not be possible” for open-source adaptations.

Where Kenya Gets It Right: Regulatory Sandboxes

One area where Kenya’s framework represents a genuine advance over Nigeria’s is regulatory sandboxes. The AI Authority would be explicitly mandated to establish and manage sandboxes that let startups and developers test AI products in a controlled environment, with relaxed compliance requirements during the testing phase.

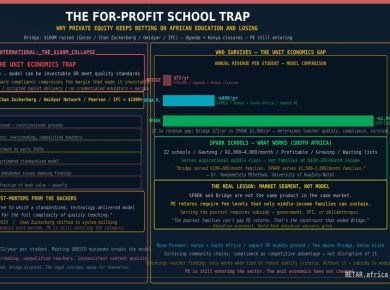

Nigeria’s bill contains no equivalent provision. There is no sandbox tier, no innovation carve-out for early-stage companies, and no phased implementation schedule that would give resource-constrained startups time to build compliant systems before enforcement begins. BETAR noted in its Nigeria AI Bill analysis that the compliance cost asymmetry — tolerable for a Series A fintech, potentially existential for a seed-stage startup — reflected a framework designed with large organisations in mind.

Kenya’s sandbox provision does not solve that asymmetry, but it creates a mechanism for managing it. A startup that cannot yet meet full compliance requirements for a high-risk AI deployment has a route to operate legally while it builds toward them. That pathway does not exist under the Nigerian framework as currently written.

What the African AI Regulatory Wave Looks Like Now

Kenya and Nigeria are not alone. South Africa’s draft National AI Policy is expected to be gazetted for public consultation this quarter, with finalisation targeted for the 2026/2027 financial year. Morocco’s Digital 2030 framework includes AI governance provisions. Ghana and Rwanda have indicated legislative intent. The question is no longer whether African countries will regulate AI, but which architectural choices will dominate the continent’s emerging patchwork.

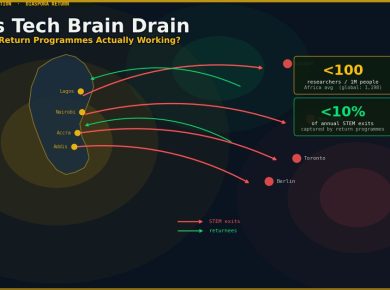

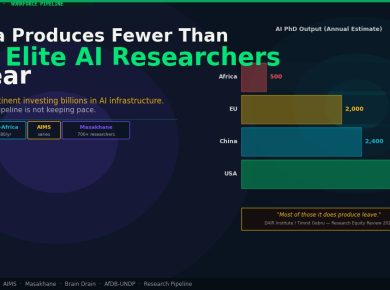

The early pattern suggests divergence rather than harmonisation. Nigeria chose a single regulator, post-hoc enforcement, and no sandbox. Kenya is proposing tripartite governance, prior approval, and sandboxes. South Africa’s sector-specific multi-regulator model differs from both. The African Union’s continental AI strategy, developed in partnership with Google and framed around data protection as the foundational layer, may provide eventual convergence glue, but that timeline is measured in years, not months.

For an African founder building AI-native products across multiple markets, the current moment requires country-by-country compliance mapping as a first-order business problem. The compliance architecture in Nairobi will not map cleanly onto Lagos, and neither will map onto Johannesburg. That fragmentation is itself a regulatory outcome — one that advantages well-capitalised incumbents over early-stage challengers, and global platforms over local builders.
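As a rough sketch of what that compliance mapping might look like in practice, the comparison drawn in this article can be held in a small lookup table. The `Regime` type and the `launch_blockers` helper are hypothetical names for illustration. The entries reflect only what this article states, Kenya's row describes a bill that has not passed, and anything the article does not state is recorded as `None` rather than guessed.

```python
from typing import NamedTuple, Optional


class Regime(NamedTuple):
    regulator_model: str
    prior_approval: Optional[bool]  # None = not stated in this article
    sandbox: Optional[bool]         # None = not stated in this article


# Values taken from this article only; Kenya's entry is a proposal, not law.
REGIMES = {
    "Nigeria": Regime("single regulator (NITDA)", False, False),
    "Kenya": Regime(
        "tripartite (Commissioner, Authority, Advisory Council)", True, True
    ),
    "South Africa": Regime("sector-specific, multi-regulator", None, None),
}


def launch_blockers(markets):
    """Split markets into those gated by prior approval and those unknown."""
    gated = [m for m in markets if REGIMES[m].prior_approval is True]
    unknown = [m for m in markets if REGIMES[m].prior_approval is None]
    return gated, unknown


gated, unknown = launch_blockers(["Nigeria", "Kenya", "South Africa"])
print("Approval needed before launch:", gated)
print("Needs legal review:", unknown)
```

Keeping unknowns explicit is deliberate: a founder reading the table should see which markets still need legal review, not a default that quietly assumes no gate exists.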

Kenya’s AI Bill has not yet passed. It will go through committee, face amendments, and likely emerge in a modified form. But the architecture it proposes — Commissioner-led, prior-approval-gated, sandbox-enabled — already establishes the terms of a policy debate that will run for years. Whether Silicon Savanna’s developers end up with a framework that enables the AI economy or merely audits it is the question Kenya’s legislative process now has to answer.

— Technology Desk, BETAR.africa